Over the past month there have been a number of package updates in the Deducer ecosystem. Deducer is a general-purpose, extensible data analysis GUI. It is designed to be a free, easy-to-use alternative to proprietary data analysis software such as SPSS, JMP, and Minitab. It has a menu system for common data manipulation and analysis tasks, and an Excel-like spreadsheet in which to view and edit data frames.

More information is available in the online manual:

http://www.deducer.org/pmwiki/pmwiki.php?n=Main.DeducerManual

An intro video is also available on YouTube.

Deducer 0.6-3

The main change in Deducer 0.6-3 is an update to the (award-winning) Plot Builder GUI to make use of the new features in ggplot2 0.9-0.

New plot builder features: Part 1

New plot builder features: Part 2

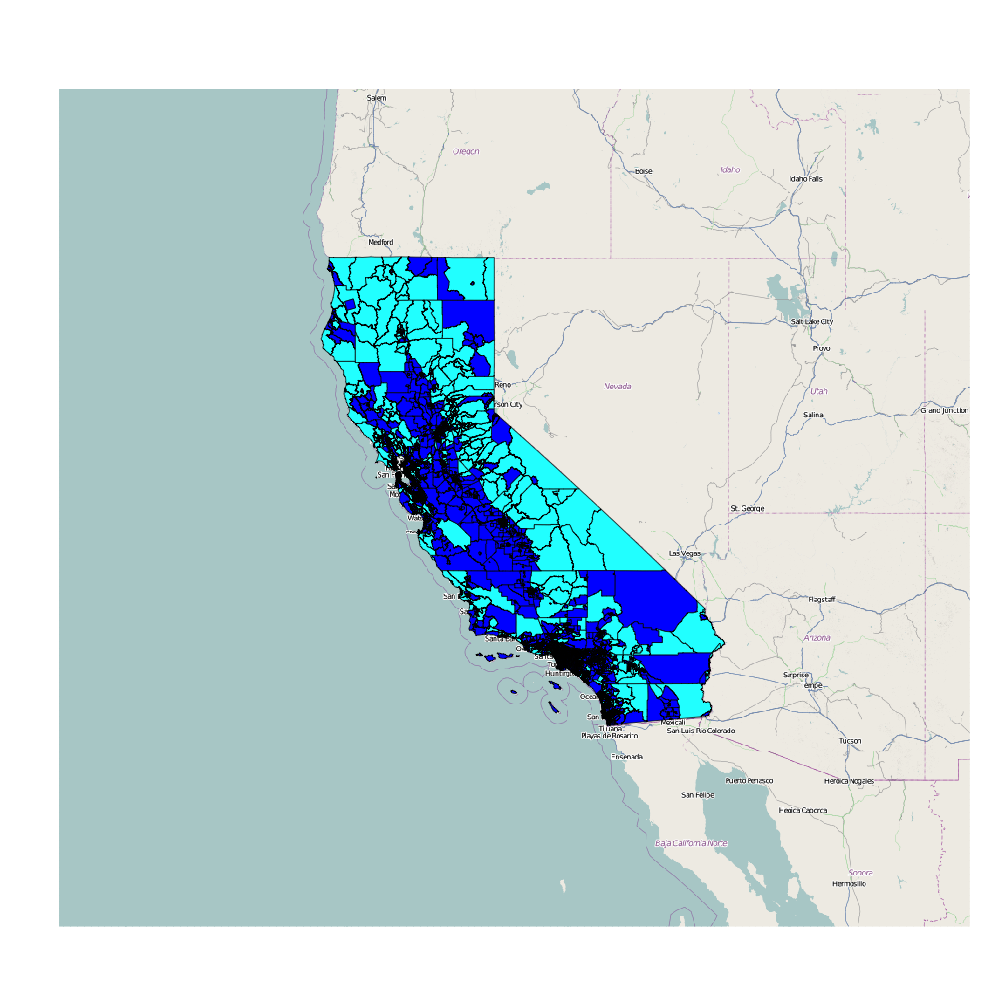

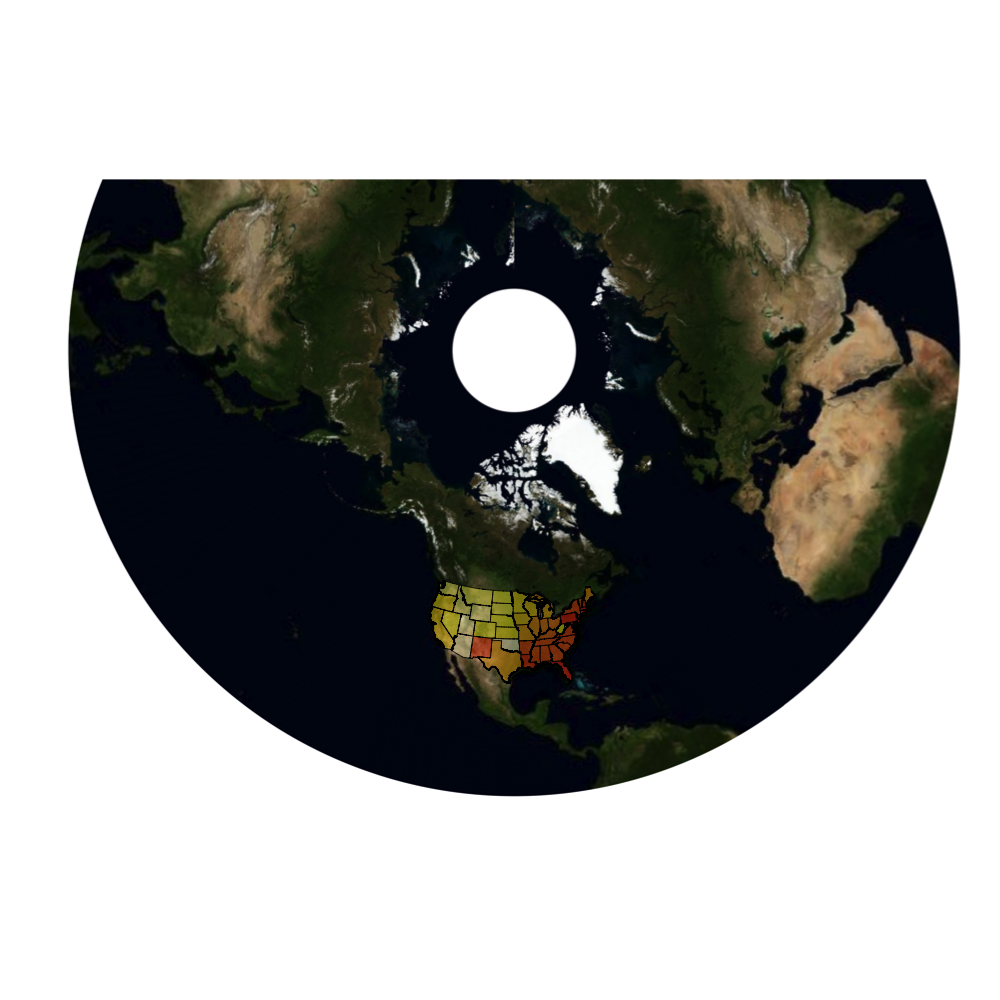

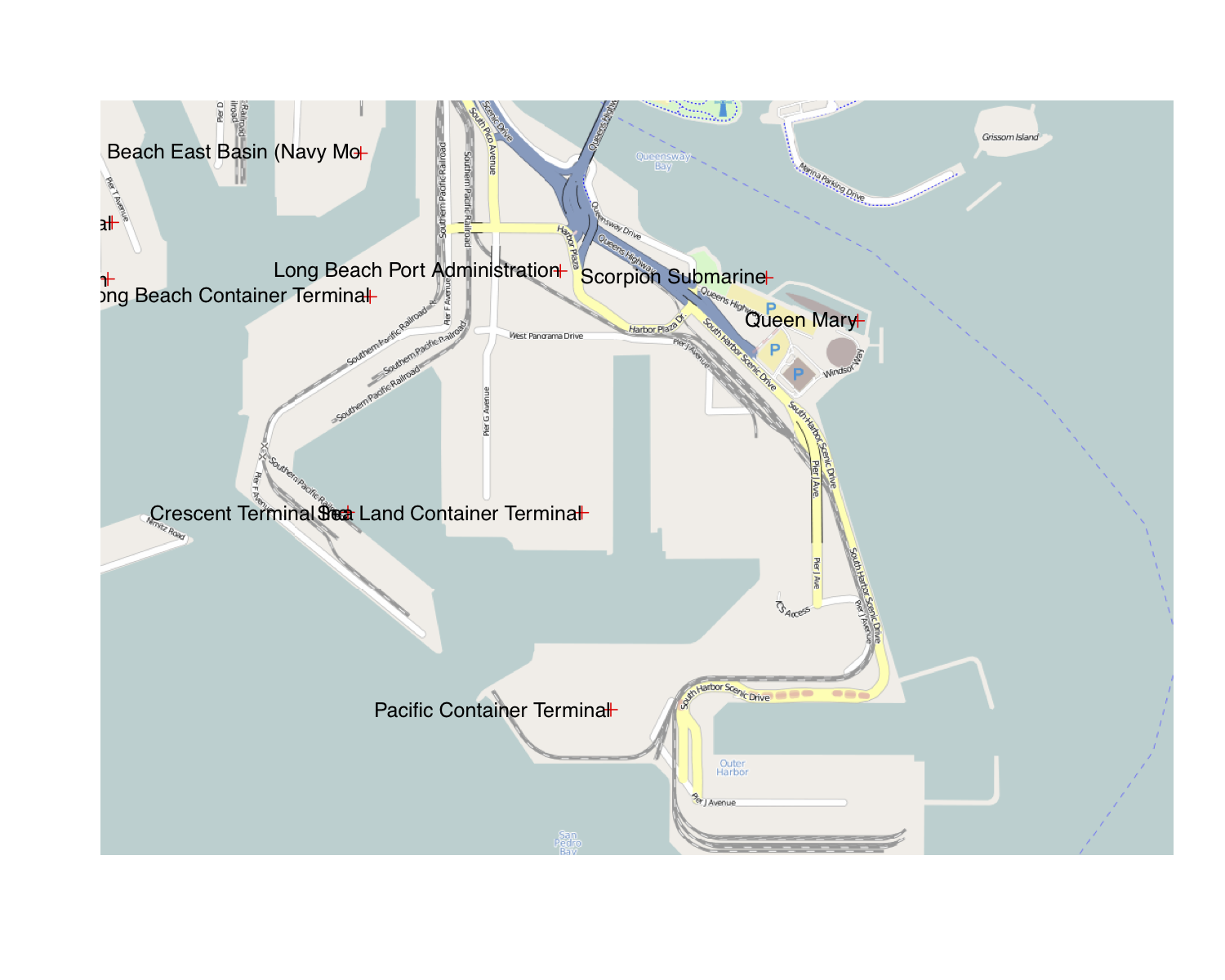

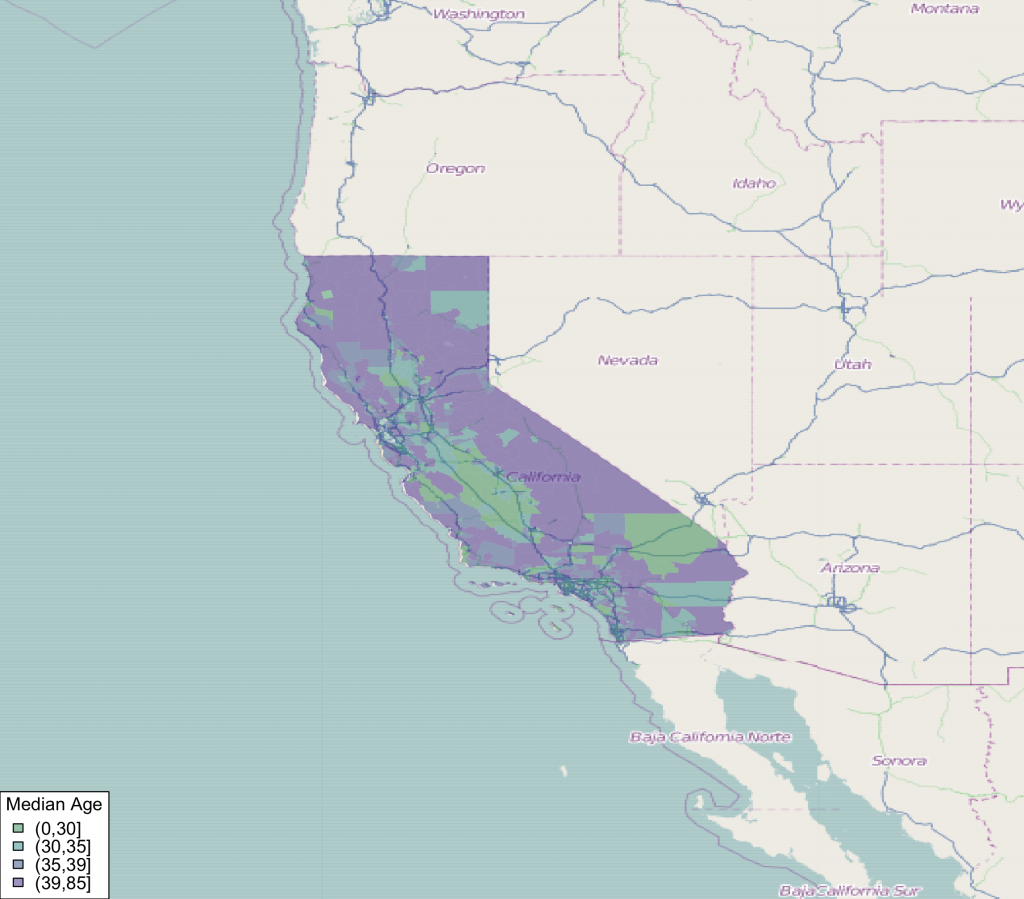

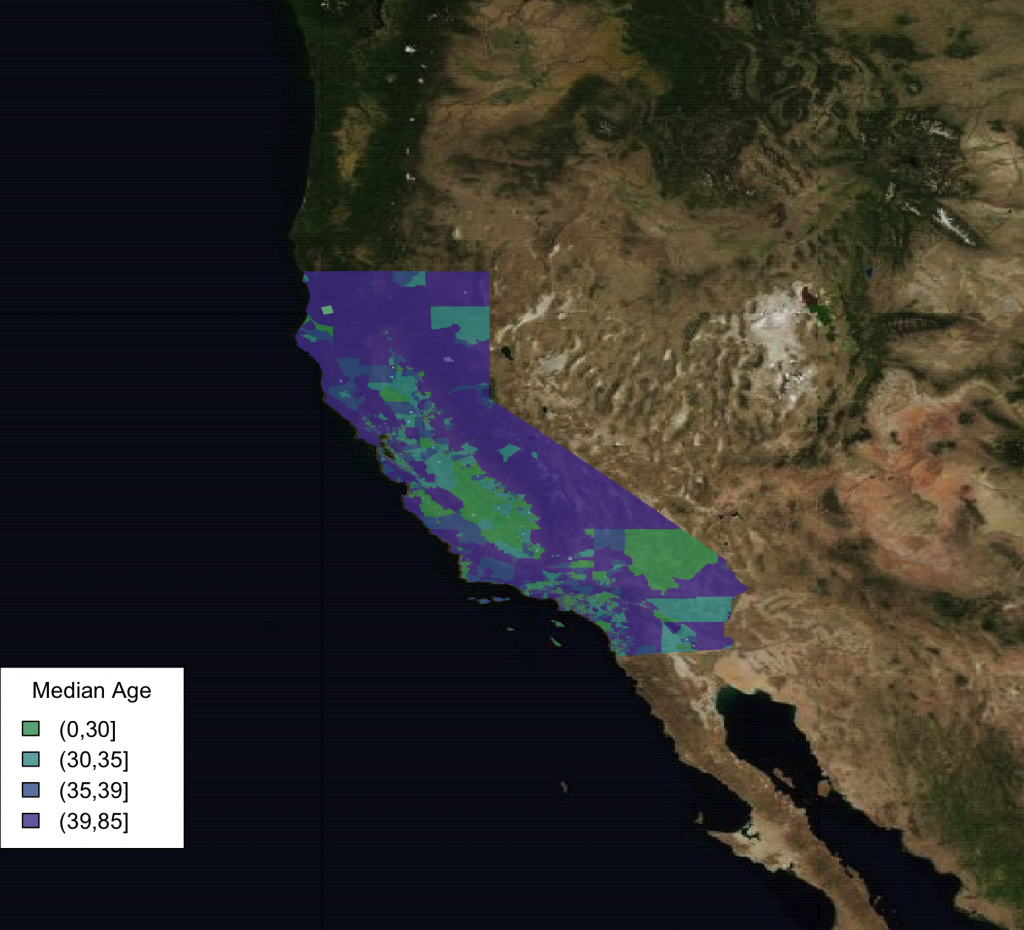

DeducerSpatial 0.4

A Deducer plug-in for spatial data analysis. It includes the ability to plot and explore OpenStreetMap and Bing satellite images. Currently there is not much here in terms of heavy data analysis, but there are some great tools for importing and exploring spatial data.

DeducerPlugInScaling 0.1-0

A Deducer plug-in for factor analysis, reliability analysis and discriminant analysis.

Version 0.1-0 includes some general improvements as well as a dialog for linear discriminant analysis (thanks to Helios De Rosario-Martinez).

http://www.deducer.org/pmwiki/pmwiki.php?n=Main.LinearDiscriminantAnalysis

DeducerExtras 1.5

Added functionality for Deducer. This package includes additional dialogs for calculating distribution function values, cluster analysis, and more.

Version 1.5 includes some general improvements along with a new dialog implementing Friedman’s test and Kendall’s W test (thanks to Helios De Rosario-Martinez).

http://www.deducer.org/pmwiki/pmwiki.php?n=Main.RankingAnalysis
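For readers unfamiliar with the two statistics behind the new ranking dialog, here is a minimal sketch of how they relate. It is written in plain Python for illustration and is not Deducer's implementation. It assumes the data are already within-block ranks with no ties, and the function names (`rank_sums`, `kendalls_w`, `friedman_statistic`) are hypothetical, chosen only for this example.

```python
def rank_sums(ranks):
    """Column sums of an n-by-k table of ranks (rows = blocks, columns = treatments)."""
    k = len(ranks[0])
    return [sum(row[j] for row in ranks) for j in range(k)]


def kendalls_w(ranks):
    """Kendall's coefficient of concordance W, assuming no tied ranks."""
    n = len(ranks)        # number of blocks (raters or subjects)
    k = len(ranks[0])     # number of treatments (items being ranked)
    mean_rank_sum = n * (k + 1) / 2
    # Sum of squared deviations of the rank sums from their mean
    s = sum((r - mean_rank_sum) ** 2 for r in rank_sums(ranks))
    return 12 * s / (n ** 2 * (k ** 3 - k))


def friedman_statistic(ranks):
    """Friedman chi-square statistic, obtained from W as n * (k - 1) * W."""
    n = len(ranks)
    k = len(ranks[0])
    return n * (k - 1) * kendalls_w(ranks)


# Made-up example: three raters each rank three items
example = [
    [1, 2, 3],
    [1, 3, 2],
    [2, 1, 3],
]
print(kendalls_w(example))
print(friedman_statistic(example))
```

W ranges from 0 (no agreement among the rankings) to 1 (identical rankings in every block), and under the null hypothesis the Friedman statistic is approximately chi-square distributed with k - 1 degrees of freedom. Real data with ties need a correction term that this sketch omits.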